Engineering leaders face a common challenge: too much data scattered across too many tools, and no clear picture of how software delivery is actually performing. A software engineering intelligence platform pulls together metrics from your Git repositories, CI/CD pipelines, and issue trackers into a single view – helping you make decisions based on evidence rather than intuition.

This guide walks you through everything you need to know about selecting the right engineering intelligence platform. You’ll learn what these platforms measure, which criteria matter most for your evaluation, and how to avoid common pitfalls. GitKraken Insights offers a full-stack developer intelligence solution that tracks DORA metrics, AI coding tool impact, and pull request analytics to give you clarity on delivery performance.

By the end of this guide, you’ll have a clear framework for evaluating platforms and understanding how each capability connects to your specific goals.

Key Takeaways: Choosing a Software Engineering Intelligence Platform

- Engineering intelligence platforms aggregate data from Git, CI/CD, and project management tools into actionable metrics for leadership decisions.

- DORA metrics – deployment frequency, lead time for changes, change failure rate, and mean time to recovery (MTTR) – are the standard benchmarks for measuring software delivery performance.

- GitKraken Insights tracks DORA metrics, AI coding assistant impact, and code quality signals in a single dashboard with 15-minute setup.

- Privacy controls and developer trust are critical – choose platforms that focus on team-level patterns rather than individual surveillance.

- Your evaluation should match platform capabilities to your team size, Git provider, and whether you need engineering or executive-level reporting.

What Is a Software Engineering Intelligence Platform?

A software engineering intelligence platform is a tool that connects to your development data sources – Git providers, CI/CD systems, project management tools – and surfaces metrics that help you understand how your engineering organization works. Instead of manually pulling reports from five different dashboards, you get a unified view.

These platforms answer questions that engineering leaders ask constantly: How long does it take code to move from first commit to production? Where do pull requests get stuck? Is your team shipping more frequently than last quarter?

The best platforms go beyond raw numbers. They show trends over time, flag anomalies, and help you connect the dots between process changes and delivery outcomes. You’re not just collecting data – you’re turning it into something you can act on.

Why Do Engineering Leaders Need Visibility Into Delivery Performance?

Gut instinct only takes you so far. When you’re asked to justify headcount, explain why a project took longer than expected, or demonstrate the ROI of a new tool, you need data that backs up your narrative.

Visibility also helps you catch problems early. A spike in cycle time might signal that code reviews are becoming a bottleneck. A drop in deployment frequency could mean your team is spending more time on unplanned work. Without metrics, these patterns stay invisible until they become crises.

For growing organizations, visibility becomes even more important. What worked when you had 10 engineers might not scale to 50. Engineering intelligence platforms help you see where processes are breaking down and where you need to invest.

What Are DORA Metrics and Why Do They Matter?

DORA metrics are four standardized indicators developed by the DevOps Research and Assessment (DORA) program, which is now part of Google Cloud. They’ve become the industry standard for measuring software delivery performance because they’re simple, measurable, and correlated with business outcomes.

Deployment Frequency

Deployment frequency tracks how often you release code to production. High-performing organizations deploy multiple times per day. Lower performers might deploy monthly or quarterly.

This metric matters because it reflects your ability to ship value incrementally. More frequent deployments mean smaller changes, faster feedback, and lower risk per release.
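
If you want to sanity-check this number yourself, a minimal sketch is below. It assumes you can export production deployment timestamps from your CI/CD system; the function name `deployments_per_week` and the sample data are illustrative only.

```python
from collections import Counter
from datetime import datetime


def deployments_per_week(deploy_times):
    """Count production deployments per ISO (year, week) bucket."""
    counts = Counter()
    for ts in deploy_times:
        year, week, _ = ts.isocalendar()
        counts[(year, week)] += 1
    return dict(counts)


# Hypothetical deployment timestamps exported from a CI/CD tool.
deploys = [
    datetime(2024, 3, 4, 10, 0),
    datetime(2024, 3, 5, 15, 30),
    datetime(2024, 3, 7, 9, 15),
    datetime(2024, 3, 12, 11, 0),
]
weekly = deployments_per_week(deploys)
print(weekly)
```

Bucketing by week rather than by day smooths out quiet days and makes quarter-over-quarter comparisons easier to read.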

Lead Time for Changes

Lead time measures the time from first commit to production deployment. It captures your entire development pipeline—coding, review, testing, and release.

Short lead times indicate that your process is efficient and that code doesn’t sit waiting in queues. Long lead times often point to bottlenecks in code review, testing, or deployment automation.
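
As a rough illustration, lead time can be computed from pairs of timestamps. The sketch below assumes you already have, for each change, the time of its first commit and the time it reached production; the median is used here as one reasonable summary, not as the only valid one.

```python
from datetime import datetime
from statistics import median


def median_lead_time_hours(changes):
    """Median hours between first commit and production deployment."""
    hours = [
        (deployed - first_commit).total_seconds() / 3600
        for first_commit, deployed in changes
    ]
    return median(hours)


# Hypothetical (first_commit, deployed) pairs.
changes = [
    (datetime(2024, 3, 4, 9, 0), datetime(2024, 3, 5, 9, 0)),    # 24 hours
    (datetime(2024, 3, 4, 9, 0), datetime(2024, 3, 6, 9, 0)),    # 48 hours
    (datetime(2024, 3, 7, 12, 0), datetime(2024, 3, 7, 18, 0)),  # 6 hours
]
lead_time = median_lead_time_hours(changes)
print(lead_time)
```

A median is less sensitive than a mean to the occasional change that sits for weeks, which is often what you want when tracking the typical experience.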

Change Failure Rate

Change failure rate is the percentage of deployments that cause a failure in production. This includes incidents, rollbacks, hotfixes, and patches.

A low change failure rate means your testing and review processes are catching issues before they reach your customers. High failure rates suggest gaps in quality assurance.
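
The calculation itself is a simple ratio. Here is a minimal sketch, assuming each deployment record carries a `caused_failure` flag (a hypothetical field name; how you decide that a deployment caused a failure depends on your incident process).

```python
def change_failure_rate(deployments):
    """Percentage of deployments flagged as causing a production failure."""
    if not deployments:
        return 0.0
    failed = sum(1 for d in deployments if d["caused_failure"])
    return 100.0 * failed / len(deployments)


# Hypothetical data: 20 deployments, 3 of which led to a rollback or hotfix.
deployments = [{"caused_failure": i < 3} for i in range(20)]
rate = change_failure_rate(deployments)
print(rate)
```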

Mean Time to Recovery (MTTR)

MTTR measures how quickly you can restore service after a production incident. High-performing organizations recover in less than an hour. Lower performers might take days or weeks.

Fast recovery requires good observability, clear incident response processes, and the ability to deploy fixes quickly. MTTR reflects your operational maturity as much as your development practices.
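
To make the definition concrete, here is a small sketch that averages recovery time across incidents. It assumes your incident tracker gives you a start time and a restore time for each incident; the function name and data are illustrative.

```python
from datetime import datetime
from statistics import mean


def mttr_minutes(incidents):
    """Mean minutes from incident start to service restoration."""
    if not incidents:
        return None  # no incidents in the period, so there is nothing to average
    durations = [
        (restored - started).total_seconds() / 60
        for started, restored in incidents
    ]
    return mean(durations)


# Hypothetical (started, restored) pairs.
incidents = [
    (datetime(2024, 3, 4, 10, 0), datetime(2024, 3, 4, 10, 30)),  # 30 minutes
    (datetime(2024, 3, 9, 22, 0), datetime(2024, 3, 9, 23, 30)),  # 90 minutes
]
mttr = mttr_minutes(incidents)
print(mttr)
```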

How Does GitKraken Insights Track DORA Metrics?

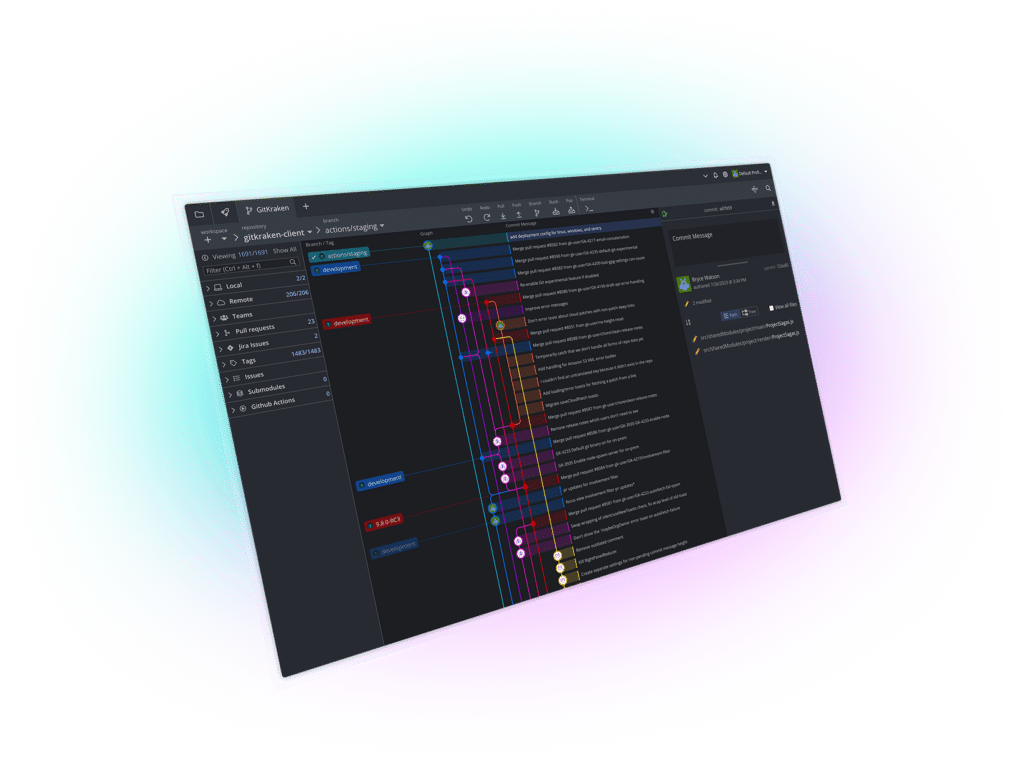

GitKraken Insights tracks all four DORA metrics with trend context that matters for decision-making. When lead time spikes, you can see whether it’s a temporary issue or an emerging pattern that needs attention.

The platform connects to your Git repositories and CI/CD pipelines, then automatically calculates deploy frequency, change lead time, MTTR, and defect rate based on your actual release data. You configure release tracking rules once, and Insights handles the rest.

What sets GitKraken apart is how quickly you can get started. Rather than spending months on implementation, you can connect your repos and see your first metrics in about 15 minutes. That speed matters when you need answers now, not next quarter.

What Pull Request Metrics Should You Track?

Pull request metrics reveal where code gets stuck on its way to production. They’re more granular than DORA metrics and help you pinpoint specific bottlenecks in your review process.

First Response Time

First response time measures how long a PR sits before someone leaves a review or comment. Long wait times here often indicate that code authors are blocked, waiting for reviewers who are too busy or unaware of pending reviews.
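
A minimal sketch of the calculation, assuming you have the PR's open time and the timestamps of any reviews or comments (the names below are hypothetical):

```python
from datetime import datetime


def first_response_hours(opened_at, review_events):
    """Hours from PR open to the earliest review or comment, or None if none yet."""
    if not review_events:
        return None
    return (min(review_events) - opened_at).total_seconds() / 3600


opened = datetime(2024, 3, 4, 9, 0)
events = [datetime(2024, 3, 5, 11, 0), datetime(2024, 3, 4, 15, 30)]
wait = first_response_hours(opened, events)
print(wait)
```

Returning `None` for PRs with no response keeps still-waiting PRs from being silently counted as zero.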

Cycle Time

Cycle time tracks the full journey from first commit to merge. It includes coding time, review time, and any back-and-forth iteration. This metric captures your end-to-end development velocity.

Review Workload Distribution

Some reviewers carry a disproportionate load, while others rarely review code. Uneven distribution leads to burnout for some and knowledge silos for others. Tracking review hours per committer helps you balance the load.
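
One way to spot an uneven load is to look at each reviewer's share of total reviews. The sketch below uses review counts as a stand-in for review hours, which is an assumption made for simplicity; the names are made up.

```python
from collections import Counter


def review_share(reviewers):
    """Map each reviewer to their fraction of all completed reviews."""
    counts = Counter(reviewers)
    total = sum(counts.values())
    return {name: count / total for name, count in counts.items()}


# Hypothetical list with one entry per completed review.
reviews = ["ana", "ana", "ana", "ben", "chi", "ana"]
share = review_share(reviews)
busiest = max(share, key=share.get)
print(busiest, round(share[busiest], 2))
```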

PRs Merged Without Review

Code that skips review bypasses your quality controls. Tracking this metric helps you identify where your process has gaps and whether teams are taking shortcuts under pressure.
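
As an illustration, this check is a simple filter over PR records. The field names (`merged`, `approvals`) are assumptions; your Git provider's API will have its own names.

```python
def merged_without_review(pull_requests):
    """Return the IDs of merged PRs that received no approvals."""
    return [
        pr["id"]
        for pr in pull_requests
        if pr["merged"] and pr["approvals"] == 0
    ]


# Hypothetical PR records.
prs = [
    {"id": 101, "merged": True, "approvals": 2},
    {"id": 102, "merged": True, "approvals": 0},
    {"id": 103, "merged": False, "approvals": 0},
]
unreviewed = merged_without_review(prs)
print(unreviewed)
```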

How Do You Measure the Impact of AI Coding Assistants?

AI coding tools like GitHub Copilot have become common in development workflows. According to research analyzing 211 million lines of code, 81% of developers now use AI in their workflow. The question is whether these tools are making your team more productive or introducing new quality risks.

Measuring AI impact requires before-and-after comparisons. You need to see how code quality, review time, and defect rates change as AI adoption increases. Without this data, you’re guessing whether your Copilot licenses are worth the investment.
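
A before-and-after comparison can be sketched as follows. It assumes weekly records with a count of merged PRs and a count of defects, plus a single adoption date; all field names and figures are hypothetical. Keep in mind that a difference between the two periods shows correlation only, since other things may have changed at the same time.

```python
from datetime import date


def defects_per_100_prs(records):
    """Defects per 100 merged PRs across the given records."""
    prs = sum(r["merged_prs"] for r in records)
    defects = sum(r["defects"] for r in records)
    return 100.0 * defects / prs if prs else 0.0


def before_and_after(records, adoption_date):
    """Split records at the adoption date and compute the rate for each side."""
    before = [r for r in records if r["week"] < adoption_date]
    after = [r for r in records if r["week"] >= adoption_date]
    return defects_per_100_prs(before), defects_per_100_prs(after)


records = [
    {"week": date(2024, 1, 1), "merged_prs": 40, "defects": 4},
    {"week": date(2024, 1, 8), "merged_prs": 60, "defects": 6},
    {"week": date(2024, 2, 5), "merged_prs": 50, "defects": 4},
    {"week": date(2024, 2, 12), "merged_prs": 50, "defects": 3},
]
rate_before, rate_after = before_and_after(records, date(2024, 2, 1))
print(rate_before, rate_after)
```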

GitKraken Insights tracks AI coding tool effectiveness across your team, showing you concrete metrics like defect rate before and after AI adoption, copy/paste versus moved code patterns, and whether AI-assisted code requires more rework during review.

What Code Quality Metrics Go Beyond Linting?

Linters catch syntax errors and style violations, but they don’t tell you whether your codebase is healthy. Code quality metrics that predict maintainability look at deeper patterns.

Code Churn

Code churn measures how often the same files are changed. High churn in specific areas often indicates technical debt, unclear requirements, or code that’s difficult to get right.
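
For a quick do-it-yourself view of churn, you can count how often each file appears in your Git history. The sketch below assumes you have saved the output of `git log --name-only --pretty=format:` to a string; the sample log is made up.

```python
from collections import Counter


def churn_by_file(name_only_log):
    """Count how many commits touched each file path in the log text."""
    paths = [line.strip() for line in name_only_log.splitlines() if line.strip()]
    return Counter(paths)


# Hypothetical log output: one path per line, blank lines between commits.
sample_log = """
src/billing.py
src/api.py

src/billing.py

src/billing.py
README.md
"""
churn = churn_by_file(sample_log)
print(churn.most_common(1))
```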

Copy/Paste Patterns

Duplicated code increases maintenance burden. When you fix a bug in one place, you might miss the copied version elsewhere. Tracking duplication helps you identify refactoring opportunities.

Hotspots

Hotspots are files that get changed frequently by many different people. These areas carry higher risk because they’re complex, widely used, and often lack clear ownership.
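
A simple way to approximate this definition is to flag files that exceed both a change-count threshold and a distinct-author threshold. The thresholds and the `(path, author)` input shape below are assumptions chosen for illustration.

```python
from collections import defaultdict


def find_hotspots(changes, min_changes=3, min_authors=2):
    """Return paths changed at least min_changes times by at least min_authors people."""
    change_count = defaultdict(int)
    authors = defaultdict(set)
    for path, author in changes:
        change_count[path] += 1
        authors[path].add(author)
    return sorted(
        path
        for path in change_count
        if change_count[path] >= min_changes and len(authors[path]) >= min_authors
    )


# Hypothetical (path, author) pairs, one per commit that touched the file.
changes = [
    ("core/auth.py", "ana"),
    ("core/auth.py", "ben"),
    ("core/auth.py", "chi"),
    ("docs/intro.md", "ana"),
    ("docs/intro.md", "ana"),
    ("docs/intro.md", "ana"),
    ("ui/nav.py", "ben"),
]
hotspots = find_hotspots(changes)
print(hotspots)
```

Here `docs/intro.md` changes just as often as `core/auth.py`, but only one person touches it, so it is not flagged.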

Test Coverage Gaps

Low test coverage in frequently changed code is a red flag. These areas have the highest defect risk and the least safety net. Tracking where coverage is weakest helps you prioritize testing efforts.
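
One rough way to prioritize is to multiply how often a file changes by how much of it is untested. This scoring formula is an illustrative heuristic, not a standard, and the inputs are hypothetical.

```python
def coverage_risk(files):
    """Rank files by change count weighted by the uncovered fraction of the file."""
    scored = [
        (f["path"], f["changes"] * (1.0 - f["coverage"]))
        for f in files
    ]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Hypothetical change counts and coverage fractions (0.0 to 1.0).
files = [
    {"path": "src/api.py", "changes": 10, "coverage": 0.9},
    {"path": "src/billing.py", "changes": 30, "coverage": 0.2},
    {"path": "src/utils.py", "changes": 5, "coverage": 0.0},
]
ranked = coverage_risk(files)
print([path for path, _ in ranked])
```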

How Do Different Platform Categories Compare?

The engineering intelligence market includes several distinct categories. Understanding what each measures helps you choose the right tool for your needs.

Metrics-Focused Platforms

These platforms pull data from Git and CI/CD to calculate DORA metrics, cycle time, and deployment analytics. They answer questions about delivery performance and help you track improvement over time.

Developer Experience Platforms

Developer experience tools combine quantitative metrics with qualitative surveys. They help you understand not just what’s happening, but how your team feels about their work environment and tooling.

Workflow Automation Platforms

Some platforms pair metrics with automation features—like automatic PR routing, labeling, or reviewer assignment. They help you act on insights directly rather than just reporting them.

Enterprise Portfolio Platforms

Larger organizations need visibility across multiple teams and projects. Enterprise platforms aggregate metrics at the portfolio level and connect engineering work to business outcomes.

What Should You Look for in Developer Privacy Controls?

Engineering intelligence platforms walk a fine line between visibility and surveillance. The best tools focus on team-level patterns rather than individual performance rankings.

Privacy controls to look for include the ability to exclude certain repositories or contributors from tracking, aggregation that prevents identifying individual performance, and clear policies about who can access detailed data.
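
To illustrate what aggregation that prevents identifying individuals can look like, the sketch below reports a team average only when enough distinct contributors are included. The minimum group size of three and the record fields are assumptions, not a description of how any particular platform works.

```python
from collections import defaultdict
from statistics import mean


def team_cycle_time(records, min_team_size=3):
    """Average cycle hours per team, suppressed (None) for teams below min_team_size."""
    by_team = defaultdict(dict)
    for r in records:
        by_team[r["team"]].setdefault(r["author"], []).append(r["cycle_hours"])
    report = {}
    for team, authors in by_team.items():
        if len(authors) < min_team_size:
            report[team] = None  # too few people; an average would expose individuals
        else:
            report[team] = mean(v for values in authors.values() for v in values)
    return report


# Hypothetical PR records.
records = [
    {"team": "payments", "author": "ana", "cycle_hours": 10.0},
    {"team": "payments", "author": "ben", "cycle_hours": 20.0},
    {"team": "payments", "author": "chi", "cycle_hours": 30.0},
    {"team": "infra", "author": "dev", "cycle_hours": 50.0},
]
report = team_cycle_time(records)
print(report)
```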

Developer trust matters. If your team believes the platform is being used for surveillance, they’ll resist adoption, game the metrics, or feel demoralized. Choose a platform that your engineers can see as a tool for improvement rather than a threat.

How Do You Evaluate Platforms for Your Team Size?

Small teams and large enterprises have different needs. Your evaluation criteria should match your scale.

Solo Developers and Small Teams (Under 20 Engineers)

You need fast setup and immediate value. Avoid platforms that require weeks of configuration or dedicated administrator support. Focus on core metrics—DORA, cycle time, PR analytics—without enterprise complexity.

Mid-Sized Teams (20–100 Engineers)

At this scale, you likely have multiple teams working across different repositories. You need visibility at both the team and aggregate level. Integration with your project management tools becomes more important.

Enterprise Organizations (100+ Engineers)

Large organizations need role-based access controls, single sign-on integration, and the ability to slice data across business units, product lines, or geographic regions. Security certifications like SOC 2 become requirements rather than nice-to-haves.

Step-by-Step: How to Evaluate Engineering Intelligence Platforms

Use this framework to structure your evaluation process and avoid common mistakes.

Step 1: Define Your Primary Use Case

Start by answering one question: What decision will this platform help you make? Are you trying to improve deployment frequency? Justify tooling investments? Identify bottlenecks? Your answer shapes which metrics matter most.

Step 2: List Your Data Sources

Document your Git provider (GitHub, GitLab, Bitbucket), CI/CD tools, project management system, and any other data sources you want to connect. Verify that each platform you evaluate supports these integrations.

Step 3: Assess Setup Complexity

Ask vendors how long implementation typically takes. Request a demo that shows the actual setup process, not just the finished dashboard. Platforms that promise results in days are different from those that require months.

Step 4: Evaluate Metric Accuracy

During a trial, compare the platform’s numbers against your own calculations. Do the deployment counts match your release records? Does cycle time align with your understanding of how long PRs take? Discrepancies might indicate configuration issues or limitations in the platform’s data model.
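
A comparison like this can be scripted so you can repeat it across the trial. The sketch below flags any metric where the platform's number differs from your own by more than a relative tolerance; the 5% default and the metric names are assumptions.

```python
def find_discrepancies(platform, own, tolerance=0.05):
    """Return metrics where the platform value deviates from your own beyond tolerance."""
    issues = {}
    for metric, expected in own.items():
        reported = platform.get(metric)
        if reported is None:
            issues[metric] = "missing from platform"
        elif expected == 0:
            if reported != 0:
                issues[metric] = f"platform={reported}, own=0"
        elif abs(reported - expected) / abs(expected) > tolerance:
            issues[metric] = f"platform={reported}, own={expected}"
    return issues


# Hypothetical numbers: your own release records versus the trial dashboard.
own = {"deployments": 40, "median_cycle_hours": 50.0}
platform = {"deployments": 40, "median_cycle_hours": 62.0}
issues = find_discrepancies(platform, own)
print(issues)
```

A flagged metric is a prompt to check definitions first (for example, whether cycle time starts at first commit or at PR open) before concluding that the platform is wrong.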

Step 5: Test the Reporting Experience

Look at how the platform presents insights. Can you easily export data for executive presentations? Are the visualizations clear enough for non-technical stakeholders? Good metrics are useless if you can’t communicate them effectively.

Step 6: Review Privacy and Access Controls

Understand who can see what data. Can you limit access to team-level aggregates for certain roles? Does the platform offer anonymization options? Make sure the controls align with your team’s expectations.

Step 7: Calculate Total Cost of Ownership

Licensing fees are just part of the cost. Factor in implementation time, ongoing administration, and training. A platform that’s 50% cheaper but requires three months of setup might cost more in practice.
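
Here is that comparison worked through with made-up numbers. Every figure below (license fees, setup time, and a fully loaded engineer cost of 12,000 per month) is a hypothetical assumption; substitute your own.

```python
def first_year_cost(license_fee, setup_months, engineer_cost_per_month, admin_cost=0.0):
    """License fee plus the cost of engineering time spent on setup and administration."""
    return license_fee + setup_months * engineer_cost_per_month + admin_cost


ENGINEER_COST_PER_MONTH = 12_000  # hypothetical fully loaded cost of one engineer

# Platform A: full price, about one week of setup.
tco_a = first_year_cost(20_000, 0.25, ENGINEER_COST_PER_MONTH)
# Platform B: 50% cheaper license, three months of setup.
tco_b = first_year_cost(10_000, 3, ENGINEER_COST_PER_MONTH)

print(tco_a, tco_b)
```

Under these assumptions the cheaper license ends up costing roughly twice as much in the first year, because setup time dominates the total.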

What Questions Should You Ask Vendors During Evaluation?

Prepare specific questions to cut through marketing language and understand actual capabilities.

Ask about data latency: How quickly do new commits and deployments appear in the dashboard? Near-real-time data matters if you’re using metrics in daily standups.

Ask about historical data: How far back can you import existing data? Some platforms only track from the connection date forward, while others can import years of history.

Ask about custom metrics: Can you create your own calculated metrics, or are you limited to what the platform defines? Flexibility matters as your measurement needs evolve.

Ask about support: What happens when something breaks? Is support included in the license, or does it cost extra?

How Does GitKraken Insights Differ From Enterprise Alternatives?

Enterprise engineering intelligence platforms often require 3–6 months of implementation, dedicated consulting, and six-figure annual budgets. GitKraken Insights takes a different approach.

Setup takes about 15 minutes. You connect your repositories, and the platform starts tracking metrics automatically. There’s no need for external consultants or lengthy configuration projects.

GitKraken Insights costs significantly less than enterprise alternatives while covering the metrics that matter most: DORA metrics, PR analytics, code quality signals, and AI impact measurement. The platform is built by a team that’s been serving developers for years, so it’s designed to earn trust rather than create surveillance concerns.

For engineering leaders who need answers quickly without enterprise overhead, this combination of speed, simplicity, and coverage makes GitKraken Insights worth evaluating.

What Are Common Mistakes When Selecting an Engineering Intelligence Platform?

Avoid these pitfalls to make your evaluation more effective.

Choosing Based on Feature Count

More features don’t mean better outcomes. A platform with 50 metrics that you never use is worse than one with 10 metrics that drive decisions. Focus on depth in the areas that matter to you, not breadth for its own sake.

Ignoring Data Quality

Metrics are only as good as the underlying data. If your Git commits lack proper tagging, or your CI/CD doesn’t track deployments accurately, no platform can produce reliable metrics. Audit your data quality before you evaluate tools.

Skipping the Developer Perspective

Engineering intelligence platforms affect your whole team, not just leadership. Involve engineers in the evaluation. Their feedback on usability, privacy concerns, and trust will determine adoption success.

Underestimating Change Management

Buying a platform is easy. Getting your organization to use it is hard. Plan for training, communication, and ongoing reinforcement. A platform that nobody opens doesn’t improve anything.

How Do You Build a Measurement System That Drives Improvement?

An engineering intelligence platform is a tool, not a strategy. To drive real improvement, you need to connect metrics to actions.

Start by establishing baselines. Before you can improve, you need to know where you are. Track your DORA metrics for a few weeks without making changes. This baseline becomes your reference point.

Set specific targets. “Improve cycle time” is vague. “Reduce average cycle time from 5 days to 3 days by Q3” is actionable. Targets give your team something concrete to aim for.
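
Once you have a baseline and a target, progress is easy to express as a fraction. This sketch uses the cycle-time example above; the function name is illustrative.

```python
def progress_toward_target(baseline, target, current):
    """Fraction of the gap between baseline and target closed so far."""
    if baseline == target:
        raise ValueError("baseline and target must differ")
    return (baseline - current) / (baseline - target)


# Baseline of 5 days, target of 3 days, currently averaging 4 days.
progress = progress_toward_target(baseline=5, target=3, current=4)
print(progress)
```

A value of 1.0 means the target is met, and a negative value means the metric has moved in the wrong direction since the baseline.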

Connect metrics to experiments. When you try a process change—like reducing PR size limits or adding automated testing—track how your metrics respond. This feedback loop turns measurement into learning.

Review regularly. Schedule recurring reviews of your metrics with your team. Celebrate improvements, diagnose regressions, and adjust your approach based on what the data shows.

In Conclusion: How to Choose the Right Engineering Intelligence Platform

Selecting an engineering intelligence platform comes down to matching capabilities to your specific needs. Start by understanding what questions you’re trying to answer—whether that’s improving delivery speed, justifying investments, or identifying bottlenecks.

Evaluate platforms based on the metrics they track (DORA, PR analytics, code quality, AI impact), their integration with your existing tools, and how quickly you can get value. Don’t overlook privacy controls and developer trust—adoption depends on your team seeing the platform as helpful rather than threatening.

For most organizations, the sweet spot is a platform that covers essential metrics without enterprise complexity. GitKraken Insights delivers DORA metrics, pull request analytics, code quality signals, and AI impact measurement—all accessible in minutes rather than months.

The right platform won’t automatically make your team better. But it will give you the visibility you need to make informed decisions, catch problems early, and demonstrate the impact of your engineering organization.

FAQs About Choosing a Software Engineering Intelligence Platform

What is the difference between engineering analytics and engineering intelligence?

Engineering analytics focuses on collecting and displaying data from development tools. Engineering intelligence goes further by contextualizing that data, identifying patterns, and surfacing actionable insights.

Think of analytics as “what happened” and intelligence as “what it means.” Both are useful, but intelligence platforms add interpretation that helps you decide what to do next.

How long does it take to implement an engineering intelligence platform?

Implementation time varies dramatically. Enterprise platforms often require 3–6 months for full deployment, including custom integrations and training.

GitKraken Insights takes a different approach—you can connect your repositories and see your first metrics in about 15 minutes. This speed lets you start getting value immediately rather than waiting for a lengthy rollout.

Can engineering intelligence platforms track individual developer performance?

Most platforms can technically track individual metrics, but reputable vendors discourage this practice. Individual performance tracking creates surveillance concerns, damages trust, and often leads to gaming behavior.

GitKraken Insights focuses on team-level patterns rather than individual rankings. This approach maintains developer trust while still giving you visibility into delivery performance.

What integrations are essential for an engineering intelligence platform?

At minimum, you need integration with your Git provider (GitHub, GitLab, or Bitbucket) and your CI/CD system. Project management integration (Jira, Linear, Asana) adds context about planned versus unplanned work.

GitKraken Insights connects to multiple Git hosts and CI/CD pipelines, pulling data automatically once you authorize the connection. This means you don’t need to build custom integrations.

How do you measure ROI for an engineering intelligence platform?

Track improvements in your target metrics after implementation. If you reduce cycle time by 20%, calculate the value of shipping features faster. If you catch a bottleneck that was delaying a critical project, estimate the cost that would have resulted.

Also consider qualitative benefits: less time spent manually gathering reports, faster decision-making in leadership meetings, and clearer communication about engineering performance. These outcomes are harder to quantify but still valuable.

Should you build internal engineering analytics or buy a platform?

Building internally gives you complete customization but requires ongoing engineering investment. Custom dashboards often break when the person who built them leaves, and they rarely keep pace with evolving needs.

Buying a platform means faster time to value and less maintenance burden. GitKraken Insights sets up in minutes compared to months of DIY dashboard building, and updates automatically as new capabilities are added.
