AI coding tools are everywhere now. According to the 2025 DORA Report, 90% of developers use AI tools at work, up from 76% the year before. But here’s the uncomfortable question engineering leaders are asking: Is all this AI adoption actually making your team better?

The answer isn’t as straightforward as vendor dashboards suggest. Individual developers might feel more productive, yet organizational delivery metrics often stay flat – or worse, decline. This guide walks you through the engineering efficiency metrics that separate genuine AI impact from vanity numbers. GitKraken Insights helps you track these metrics automatically, connecting AI adoption data to real delivery outcomes so you can prove ROI to leadership.

You’ll learn how to establish baselines, interpret DORA metrics in the context of AI-assisted development, and build a measurement system that drives actual improvement – not just activity reports.

Key Takeaways: Proving AI Impact: DORA and Velocity Metrics Guide

- DORA metrics measure software delivery performance, but AI adoption requires additional context like code quality and rework rates to tell the full story.

- Establish pre-AI baselines before rolling out tools so you can accurately measure the before-and-after impact on your team’s delivery velocity.

- GitKraken Insights tracks DORA metrics alongside AI impact measurements, giving you visibility into how AI tools affect code quality and team productivity.

- Developer productivity signals like cycle time and PR pickup time reveal bottlenecks that raw velocity numbers alone can’t show you.

- Shipping velocity trends matter more than single-sprint snapshots – track patterns over three to five sprints for reliable forecasting and planning decisions.

What Are DORA Metrics and Why Do They Matter for AI-Assisted Development?

DORA metrics are four standardized performance indicators developed by the DevOps Research and Assessment (DORA) program, now part of Google Cloud, to measure software delivery effectiveness. They've become the industry benchmark for engineering performance because they predict better organizational outcomes and team well-being.

The four core DORA metrics are deployment frequency, lead time for changes, change failure rate, and mean time to recovery. Each tells you something different about how efficiently your team delivers working software to production.

When AI enters the picture, these metrics take on new significance. AI tools can accelerate code production, but that acceleration often exposes weaknesses downstream in testing, review, and deployment. You need DORA metrics to see whether faster coding actually translates to faster, more reliable delivery.

How Do the Four DORA Metrics Work Together?

Deployment frequency measures how often you ship code to production. High-performing teams deploy on demand – sometimes multiple times per day. This metric shows whether your release process supports rapid iteration or creates bottlenecks.

Lead time for changes tracks the duration from first commit to production deployment. It reveals how long code sits waiting in queues – waiting for review, waiting for testing, waiting for approval. AI might help you write code faster, but if your lead time stays the same, those gains disappear into wait states.

Change failure rate shows the percentage of deployments that cause incidents or require rollbacks. This is where AI’s impact gets complicated. The 2025 DORA Report found that AI adoption has a negative relationship with software delivery stability. Faster code production without robust testing practices leads to more failures.

Mean time to recovery (MTTR) measures how quickly you fix problems when they occur. Strong teams recover fast because they have good observability, clear ownership, and practiced incident response processes.
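To make the four definitions concrete, here is a minimal sketch of how they could be computed from a deployment log. The `Deployment` record and its field names are hypothetical; in practice you would populate them from your CI/CD and incident tools. Lead time is measured from first commit to deployment, as described above. Recovery time is measured from the failed deployment to restoration, which is one simplifying assumption among several reasonable ones.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean, median
from typing import Optional


@dataclass
class Deployment:
    # Hypothetical record shape: map these fields from your CI/CD and incident tools.
    deployed_at: datetime
    first_commit_at: datetime               # earliest commit shipped in this deployment
    failed: bool = False                    # caused an incident or needed a rollback
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def hours(delta: timedelta) -> float:
    return delta / timedelta(hours=1)


def dora_metrics(deploys: list[Deployment], period_days: int) -> dict[str, float]:
    """Summarize the four DORA metrics for one reporting period."""
    if not deploys:
        raise ValueError("no deployments in period")
    failures = [d for d in deploys if d.failed]
    lead_times = [hours(d.deployed_at - d.first_commit_at) for d in deploys]
    recoveries = [hours(d.restored_at - d.deployed_at) for d in failures if d.restored_at]
    return {
        "deploys_per_day": len(deploys) / period_days,
        "median_lead_time_hours": median(lead_times),
        "change_failure_rate": len(failures) / len(deploys),
        "mttr_hours": mean(recoveries) if recoveries else 0.0,
    }


# Illustrative two-week period with two deployments, one of which failed.
t0 = datetime(2025, 3, 3, 9, 0)
log = [
    Deployment(t0 + timedelta(days=1), t0),
    Deployment(t0 + timedelta(days=2), t0 + timedelta(days=1), failed=True,
               restored_at=t0 + timedelta(days=2, hours=3)),
]
example = dora_metrics(log, period_days=14)
# median lead time 24.0 h, change failure rate 0.5, MTTR 3.0 h
```

Using the median for lead time keeps one unusually slow change from dominating the number.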

Why Traditional DORA Metrics Miss AI’s Real Impact

Traditional DORA metrics were designed before AI coding assistants existed. They measure delivery outcomes but stay blind to how AI shapes the code itself. Your deployment frequency might rise, but you can’t tell whether that’s because of AI, better processes, or just more micro-commits.

The 2025 DORA Report introduced “rework rate” as a fifth metric specifically to address this gap. Rework rate tracks how often you push unplanned fixes to production – a blind spot in the original four metrics that becomes critical when AI generates a significant portion of your codebase.
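Rework rate needs a way to tell unplanned fixes apart from planned work. The sketch below assumes, hypothetically, that your team ships unplanned fixes from branches with a known prefix such as `hotfix/`. If you tag them with incident links or labels instead, swap in that check.

```python
def rework_rate(deploy_branches: list[str], fix_prefixes: tuple[str, ...] = ("hotfix/",)) -> float:
    """Share of production deployments that were unplanned fixes.

    Assumes (hypothetically) that unplanned fixes ship from branches whose names
    start with one of `fix_prefixes`; adapt this to your own tagging convention.
    """
    if not deploy_branches:
        return 0.0
    fixes = sum(1 for branch in deploy_branches if branch.startswith(fix_prefixes))
    return fixes / len(deploy_branches)


deployed = ["feature/search", "hotfix/login-timeout", "feature/export", "feature/billing"]
print(rework_rate(deployed))  # 0.25
```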

Research from Faros AI analyzing over 10,000 developers found what they call the “AI Productivity Paradox”: AI coding assistants dramatically boost individual output – 21% more tasks completed, 98% more pull requests merged – but organizational delivery metrics stay flat.

How to Establish Pre-AI Baselines for Accurate Measurement

You can’t prove AI impact without knowing where you started. Establishing baselines before rolling out AI tools gives you the “before” picture needed for meaningful “after” comparisons.

Start by capturing at least 30 days of baseline data across all DORA metrics. Record your team's deployment frequency, average lead time, change failure rate, and MTTR. These numbers become the reference point you compare against when measuring AI's effect.

What Baseline Metrics Should You Capture Before AI Adoption?

Beyond DORA metrics, capture developer productivity signals that reveal how work flows through your system. Track cycle time (how long items take from start to finish), PR pickup time (how long PRs wait before review begins), and review turnaround time.

Document your team’s code quality indicators: defect rates, test coverage, code churn patterns, and the ratio of new code to maintenance work. These baselines help you spot whether AI accelerates technical debt accumulation or genuinely improves output quality.

Record team composition and capacity during your baseline period. Knowing who was on the team, their experience levels, and any unusual circumstances (holidays, on-call rotations, major projects) helps you interpret changes accurately later.

How Long Should Your Baseline Period Be?

A minimum 30-day baseline captures enough variation to establish reliable averages. But if your team experiences significant seasonal patterns or project-based cycles, extend to 60 or 90 days for more accurate representation.

Account for anomalies during baseline collection. Major incidents, team changes, or unusual project demands can skew your numbers. Either extend your baseline to smooth out these bumps or document them clearly so you can adjust interpretations accordingly.
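One way to handle documented anomalies is to exclude them explicitly and report how many days remain. The sketch below summarizes a single daily metric, for example median PR cycle time in hours, and checks it against the 30-day minimum. The data is illustrative. The spread (population standard deviation) gives you a rough sense of normal day-to-day noise when you compare later periods.

```python
from datetime import date
from statistics import mean, median, pstdev


def baseline_summary(daily: dict[date, float], anomalies: set[date], min_days: int = 30) -> dict:
    """Summarize a pre-AI baseline, excluding days documented as anomalous."""
    kept = [value for day, value in daily.items() if day not in anomalies]
    if not kept:
        raise ValueError("every baseline day was marked anomalous")
    return {
        "days_used": len(kept),
        "meets_minimum": len(kept) >= min_days,
        "mean": mean(kept),
        "median": median(kept),
        "stdev": pstdev(kept),  # normal variation, to judge whether later changes exceed noise
    }


# Illustrative January: cycle time alternates between 8 and 12 hours,
# and January 15 (a major incident) is documented and excluded.
january = {date(2025, 1, d): (8.0 if d % 2 else 12.0) for d in range(1, 32)}
january[date(2025, 1, 15)] = 55.0
summary = baseline_summary(january, anomalies={date(2025, 1, 15)})
# 30 days used, mean 10.0, median 10.0, stdev 2.0
```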

Developer Productivity Metrics That Reveal AI’s True Effect

DORA metrics show delivery outcomes, but developer productivity metrics reveal the underlying dynamics. These signals help you understand why your DORA numbers look the way they do – and where AI makes a real difference.

Cycle time breaks down into active work time and wait time. AI tools primarily affect active work time – the hours developers spend actually writing code. But if your cycle time is dominated by wait time (waiting for reviews, waiting for builds, waiting for approvals), AI won’t move the needle much.
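You can estimate the split from a work item's status history. The sketch below treats time spent in an "active" state as work time and everything else as waiting. The state names are hypothetical; map them to your tracker's statuses. A high `wait_share` suggests that faster coding alone won't shorten cycle time much.

```python
from datetime import datetime, timedelta

# Hypothetical state names; map them to the statuses your issue tracker uses.
ACTIVE_STATES = {"in_progress"}


def cycle_time_breakdown(transitions: list[tuple[datetime, str]], done_at: datetime) -> dict[str, float]:
    """Split one work item's cycle time into active and wait hours.

    transitions: (entered_at, state) pairs in chronological order, starting when work begins.
    """
    if not transitions:
        raise ValueError("no state transitions recorded")
    exits = [entered for entered, _ in transitions[1:]] + [done_at]
    active = wait = 0.0
    for (entered, state), left in zip(transitions, exits):
        spent = (left - entered) / timedelta(hours=1)
        if state in ACTIVE_STATES:
            active += spent
        else:
            wait += spent
    total = active + wait
    return {
        "active_hours": active,
        "wait_hours": wait,
        "wait_share": wait / total if total else 0.0,
    }


# Illustrative item: 6 hours of coding spread around 30 hours of queueing.
item = cycle_time_breakdown(
    [
        (datetime(2025, 3, 3, 9), "in_progress"),
        (datetime(2025, 3, 3, 13), "awaiting_review"),
        (datetime(2025, 3, 4, 13), "in_progress"),
        (datetime(2025, 3, 4, 15), "awaiting_deploy"),
    ],
    done_at=datetime(2025, 3, 4, 21),
)
```

In this example, even halving the active hours would cut total cycle time by only about 8%.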

Which Developer Productivity Signals Matter Most?

PR cycle time shows how long pull requests take from creation to merge. This metric captures the full review and approval workflow. Watch for whether AI-generated code takes longer to review because reviewers need extra time to verify AI suggestions.

Pickup time measures delay between PR submission and first review comment. Long pickup times indicate either insufficient reviewer availability or poor workload distribution. AI doesn’t help here – this is a process and staffing problem.

Review depth tracks how thoroughly code gets examined before merge. Metrics like comments per PR and changes requested per PR reveal whether reviews are substantive or rubber-stamp exercises. With AI-generated code, review depth becomes critical for catching subtle issues that “look right but aren’t quite.”
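Here is a minimal sketch of the three review signals above, computed from pull request data. The `PullRequest` shape is hypothetical and would be filled from your Git host's pull request and review APIs. Pickup time uses the first review comment, PR cycle time runs from creation to merge, and review depth is approximated as comments and change requests per PR.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class PullRequest:
    # Hypothetical shape: fill it from your Git host's pull request and review APIs.
    created_at: datetime
    merged_at: Optional[datetime] = None
    review_comment_times: list[datetime] = field(default_factory=list)
    changes_requested: int = 0


def hours(delta: timedelta) -> float:
    return delta / timedelta(hours=1)


def pr_signals(prs: list[PullRequest]) -> dict[str, Optional[float]]:
    """Pickup time, PR cycle time, and review depth for a set of PRs."""
    if not prs:
        raise ValueError("no pull requests")
    pickup = [hours(min(p.review_comment_times) - p.created_at)
              for p in prs if p.review_comment_times]
    cycle = [hours(p.merged_at - p.created_at) for p in prs if p.merged_at]
    return {
        "median_pickup_hours": median(pickup) if pickup else None,
        "median_pr_cycle_hours": median(cycle) if cycle else None,
        "comments_per_pr": sum(len(p.review_comment_times) for p in prs) / len(prs),
        "changes_requested_per_pr": sum(p.changes_requested for p in prs) / len(prs),
    }


prs = [
    PullRequest(datetime(2025, 3, 3, 9), datetime(2025, 3, 3, 17),
                [datetime(2025, 3, 3, 11), datetime(2025, 3, 3, 12)], changes_requested=1),
    PullRequest(datetime(2025, 3, 3, 10), datetime(2025, 3, 4, 10),
                [datetime(2025, 3, 3, 14)]),
]
signals = pr_signals(prs)
# pickup 3.0 h, PR cycle 16.0 h, 1.5 comments and 0.5 change requests per PR
```

Tagging each PR as AI-assisted or not (however your team records that) and running the same function on each group shows whether AI-heavy PRs take longer to review.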

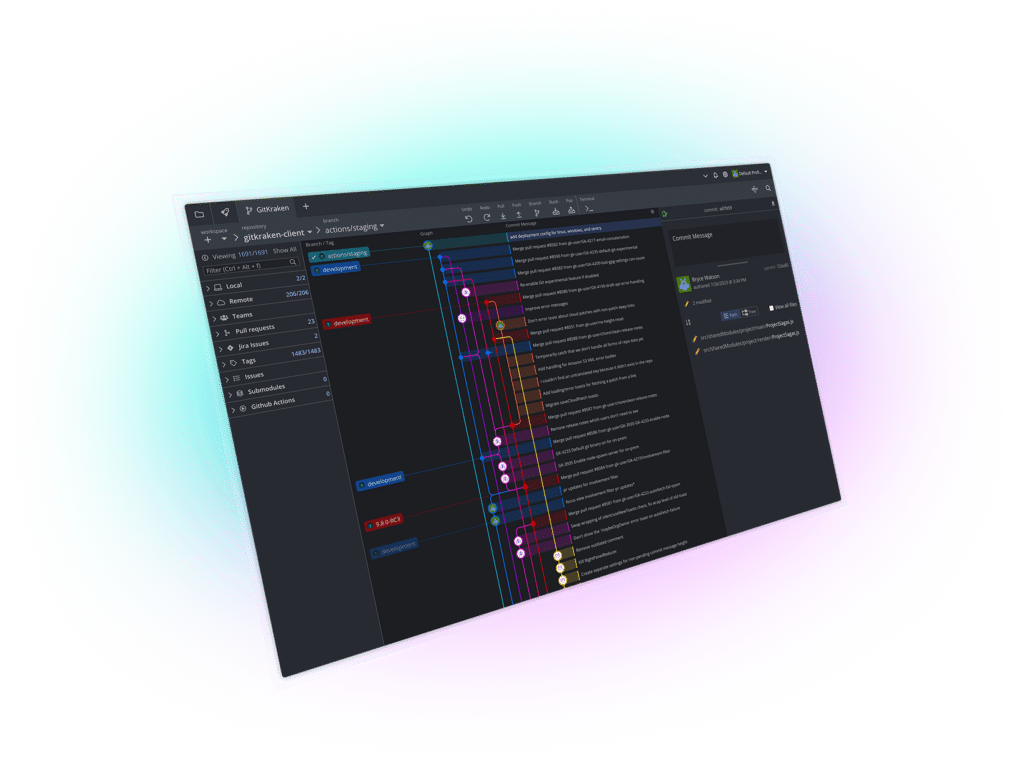

GitKraken Insights tracks these metrics automatically, connecting your pull request analytics to DORA metrics so you can see how review patterns affect overall delivery performance.

How Do You Measure Developer Experience in AI-Assisted Teams?

Developer experience surveys capture what metrics alone can’t tell you. Ask your team about their satisfaction with AI tools, where they still hit friction, and what would make them more effective.

The 2025 DORA Report found that organizations with strong developer experience practices get more benefit from AI adoption. Happy, well-supported developers use AI more effectively and produce better outcomes. Frustrated developers might use AI as a crutch that creates problems downstream.

Track the “verification tax” – time developers spend reviewing and debugging AI suggestions. Stack Overflow’s 2025 Developer Survey found that 66% of developers cited “AI solutions that are almost right, but not quite” as their biggest frustration. Time saved on typing often returns as time spent auditing.

How to Track Shipping Velocity Trends Over Time

Shipping velocity measures how much value your team delivers over time. Unlike sprint velocity (which counts story points), shipping velocity focuses on features, fixes, and improvements reaching customers. This distinction matters because AI can inflate story point completion without increasing actual delivered value.

Track velocity trends over rolling three-to-five sprint windows rather than looking at single sprints. Individual sprints fluctuate based on holidays, sick days, and sprint-specific challenges. Multi-sprint averages smooth out these bumps and reveal true capacity.
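A trailing average is the simplest way to implement this. The sketch below averages the last `window` sprints and only emits a value once a full window is available. The per-sprint counts are illustrative and represent items reaching production, not story points.

```python
def rolling_average(per_sprint: list[float], window: int = 3) -> list[float]:
    """Trailing mean over the last `window` sprints, once a full window exists."""
    if window < 1:
        raise ValueError("window must be at least 1")
    return [
        sum(per_sprint[i - window + 1 : i + 1]) / window
        for i in range(window - 1, len(per_sprint))
    ]


items_shipped = [12, 20, 9, 15, 18, 11]  # items reaching production per sprint (illustrative)
trend = rolling_average(items_shipped, window=3)
# roughly [13.67, 14.67, 14.0, 14.67]: steady, despite single sprints ranging from 9 to 20
```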

What Shipping Velocity Patterns Indicate AI Success?

Healthy AI adoption shows velocity increasing while quality metrics hold steady or improve. Your team ships more features without corresponding increases in defect rates or production incidents. This pattern indicates AI genuinely accelerates delivery rather than just accelerating code production.

Watch for velocity increases paired with rising technical debt indicators. If you’re shipping faster but code churn increases, test coverage drops, or defect rates climb, AI might be accelerating your way into a maintenance crisis.
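The two patterns above can be expressed as a simple rule that compares a post-rollout window against the baseline. In the sketch below, the metric names and the 5% tolerance are illustrative assumptions. It flags a "warning" when delivery speeds up while defects, churn, or coverage move the wrong way.

```python
def relative_change(before: float, after: float) -> float:
    if before == 0:
        raise ValueError("baseline value must be non-zero")
    return (after - before) / before


def adoption_signal(before: dict[str, float], after: dict[str, float], tolerance: float = 0.05) -> str:
    """Classify a before/after comparison. Metric keys are hypothetical names."""
    velocity_up = relative_change(before["shipped_per_sprint"], after["shipped_per_sprint"]) > tolerance
    quality_slipping = (
        relative_change(before["defect_rate"], after["defect_rate"]) > tolerance
        or relative_change(before["churn_rate"], after["churn_rate"]) > tolerance
        or relative_change(before["test_coverage"], after["test_coverage"]) < -tolerance
    )
    if velocity_up and quality_slipping:
        return "warning"      # faster, but quality indicators are slipping
    if velocity_up:
        return "healthy"      # faster with stable quality
    if quality_slipping:
        return "investigate"  # quality slipping without a delivery gain
    return "no_change"


baseline = {"shipped_per_sprint": 10.0, "defect_rate": 0.04, "churn_rate": 0.20, "test_coverage": 0.70}
post_rollout = {"shipped_per_sprint": 14.0, "defect_rate": 0.06, "churn_rate": 0.20, "test_coverage": 0.70}
print(adoption_signal(baseline, post_rollout))  # warning
```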

GitKraken Insights shows velocity alongside code quality metrics so you can spot these patterns early. When lead time spikes, you can see whether it’s a temporary issue or an emerging trend that needs attention.

How Do You Separate AI Impact from Other Velocity Factors?

Velocity changes have many causes: team composition shifts, project complexity changes, process improvements, tooling upgrades. Isolating AI’s contribution requires controlling for these variables.

Compare velocity trends across teams with different AI adoption levels. If your AI-enabled team outpaces similar teams without AI tools, that’s stronger evidence than comparing before-and-after numbers that might reflect other changes.
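A common way to formalize this comparison is difference-in-differences, a standard quasi-experimental technique rather than anything specific to DORA or GitKraken. You subtract the comparison team's before/after change from the AI-enabled team's change. This removes shifts that affected both teams, such as a new CI setup. The estimate is only meaningful if both teams were trending similarly before the rollout. The numbers below are illustrative.

```python
from statistics import mean


def diff_in_diff(ai_before: list[float], ai_after: list[float],
                 comparison_before: list[float], comparison_after: list[float]) -> float:
    """Difference-in-differences estimate for one metric (e.g. median PR cycle hours per sprint).

    Assumes both teams would have followed parallel trends without AI tools.
    """
    ai_change = mean(ai_after) - mean(ai_before)
    comparison_change = mean(comparison_after) - mean(comparison_before)
    return ai_change - comparison_change


estimate = diff_in_diff(
    ai_before=[40, 42, 38], ai_after=[31, 29, 30],                  # AI team: 40 -> 30 hours
    comparison_before=[43, 41, 42], comparison_after=[39, 37, 38],  # comparison team: 42 -> 38
)
# -6: of the AI team's 10-hour improvement, about 6 hours is not explained by shared factors
```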

Track AI-specific indicators like the percentage of code touched by AI assistants, acceptance rates for AI suggestions, and time spent on AI-assisted versus manual coding tasks. These granular metrics help attribute velocity changes to AI rather than confounding factors.
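The first two indicators reduce to simple ratios once you have the raw counts. How you obtain "suggestions shown/accepted" and "AI-assisted lines" depends on your assistant's telemetry or on a team convention such as commit trailers. Both are assumptions here, and the numbers are illustrative.

```python
def acceptance_rate(shown: int, accepted: int) -> float:
    """Share of AI suggestions that developers accepted."""
    return accepted / shown if shown else 0.0


def ai_line_share(prs: list[tuple[int, int]]) -> float:
    """Share of changed lines that were AI-assisted.

    prs: (ai_assisted_lines, total_changed_lines) per merged PR.
    """
    total = sum(changed for _, changed in prs)
    return sum(ai for ai, _ in prs) / total if total else 0.0


print(acceptance_rate(shown=200, accepted=60))           # 0.3
print(ai_line_share([(120, 400), (0, 150), (80, 250)]))  # 0.25
```

Weighting by changed lines, rather than averaging per-PR percentages, keeps a handful of tiny PRs from skewing the share.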

Engineering Management Analytics: Building Your Measurement Dashboard

Effective AI impact measurement requires a dashboard that connects individual metrics into a coherent picture. Your dashboard should answer three questions: Are we delivering better software? Are we delivering it faster? Is AI contributing to these outcomes?

Start with DORA metrics as your foundation. Display deployment frequency, lead time, change failure rate, and MTTR with trend lines showing direction over time. Add context annotations for major changes like AI tool rollouts, team changes, or process shifts.

What Metrics Belong on Your AI Impact Dashboard?

Layer in AI-specific metrics above your DORA foundation. Track AI tool adoption rates across your team, showing which developers use AI tools and how frequently. Monitor code quality metrics segmented by AI-assisted versus manual code to compare outcomes.

Include developer experience indicators: survey scores, self-reported productivity assessments, and qualitative feedback themes. These human signals complement quantitative metrics and often surface issues before they appear in delivery numbers.

Show the connection between metrics. Your dashboard should make it easy to see whether high AI adoption correlates with better DORA scores or whether certain patterns predict problems.

How Do You Present AI ROI to Leadership?

Leadership wants to know whether AI investments deliver value. Translate your metrics into language they understand: time saved, features delivered, incidents avoided, and cost implications.

Calculate ROI by comparing AI tool costs against measurable benefits such as reduced cycle time, faster incident recovery, or increased deployment frequency. Be honest about what you can and can't attribute directly to AI.
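A minimal sketch of the standard ratio, ROI = (benefits − costs) / costs, follows. It assumes you can estimate net hours saved per developer per week, meaning time saved minus time spent verifying AI output, and price those hours at a loaded hourly rate. The 46 working weeks and all input figures are illustrative assumptions. Running it with a pessimistic estimate as well as an optimistic one shows leadership how sensitive the result is to what you can actually attribute to AI.

```python
def ai_roi(annual_tool_cost: float, developers: int, net_hours_saved_per_week: float,
           loaded_hourly_rate: float, working_weeks: int = 46,
           other_annual_benefits: float = 0.0) -> float:
    """Return ROI as a ratio: (benefits - costs) / costs.

    net_hours_saved_per_week should already subtract verification time.
    working_weeks and other_annual_benefits are assumptions you supply.
    """
    if annual_tool_cost <= 0:
        raise ValueError("annual_tool_cost must be positive")
    time_value = developers * net_hours_saved_per_week * working_weeks * loaded_hourly_rate
    benefits = time_value + other_annual_benefits
    return (benefits - annual_tool_cost) / annual_tool_cost


optimistic = ai_roi(20_000, developers=50, net_hours_saved_per_week=1.0, loaded_hourly_rate=80)
pessimistic = ai_roi(20_000, developers=50, net_hours_saved_per_week=0.1, loaded_hourly_rate=80)
# optimistic is about 8.2 (820% return); pessimistic is about -0.08 (a small loss)
```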

Present trends rather than snapshots. A single month of improved metrics might be noise; three quarters of sustained improvement demonstrates real value. Show the trajectory and explain what’s driving it.

How to Interpret DORA Metrics When AI Adoption Increases

AI acts as an amplifier, according to the 2025 DORA Report. Strong teams use AI to become stronger. Struggling teams find that AI highlights and intensifies their existing problems. Understanding this dynamic helps you interpret your metrics correctly.

If your DORA metrics improve after AI adoption, examine whether the improvement comes from AI or from organizational capabilities that also correlate with successful AI use. Teams with strong automated testing, mature version control practices, and fast feedback loops tend to both adopt AI successfully and show good DORA scores.

Why Might DORA Metrics Get Worse After AI Adoption?

Rising change failure rates or longer MTTR after AI adoption usually signal that AI accelerated code production faster than your quality controls could handle. More code means more potential bugs. Without proportional investment in testing and review, quality suffers.

The 2025 DORA Report specifically notes that AI adoption has a negative relationship with software delivery stability. This doesn’t mean AI is bad – it means AI requires corresponding investment in quality assurance practices to realize its benefits.

If your metrics decline, don’t blame AI and abandon it. Instead, strengthen the organizational capabilities that enable AI success: automated testing, clear review standards, incident response processes, and developer support systems.

What Organizational Capabilities Amplify AI Benefits?

The DORA research identifies seven organizational capabilities that determine whether AI helps or hurts: internal platform quality, workflow clarity, team alignment, automated testing maturity, version control practices, feedback loop speed, and documentation quality.

Teams with strong internal developer platforms get more from AI because they have infrastructure to catch AI-generated issues before production. Clear workflows ensure AI-assisted code goes through appropriate review gates. Good documentation helps AI tools generate more accurate suggestions.

Invest in these capabilities alongside AI tools. The greatest return on AI investment comes not from the tools themselves, but from the organizational systems that support them.

How GitKraken Insights Helps You Track AI Impact on Your Team

GitKraken Insights connects to your Git repositories and CI/CD pipelines to surface engineering metrics automatically. Instead of building custom dashboards or maintaining spreadsheet trackers, you get DORA metrics, pull request analytics, and AI impact measurements in one view.

Setup takes about 15 minutes. Connect your repositories, and Insights begins tracking immediately. Past-month activity appears within hours; full-year history is ready within one to two days.

What Makes GitKraken Insights Different for AI Measurement?

GitKraken Insights tracks before-and-after metrics showing AI coding tool effectiveness across your team. You see code quality changes, review time improvements, and developer satisfaction – not just adoption numbers.

The platform shows DORA metrics with trend context that matters for decision-making. When lead time spikes, you understand whether it’s a temporary issue or an emerging pattern requiring intervention.

Code quality metrics go beyond linting. You see churn rates, copy/paste patterns, tech debt hotspots, test coverage trends, and file complexity. These indicators predict maintainability and show whether AI is creating sustainable code or future maintenance burdens.

How Does GitKraken Insights Support Engineering Leadership Reporting?

GitKraken Insights translates engineering performance into language leadership understands. You stop explaining what velocity means and start showing impact directly.

Pull request analytics show where PRs get stuck and why. You track pickup time, cycle time, review workload distribution, and post-merge defect rates. When leadership asks why delivery slowed, you have data-backed answers instead of speculation.

Developer experience surveys correlate sentiment with engineering metrics, connecting how your team feels to how they’re performing. This link helps you make the case for investments in developer experience that improve both satisfaction and delivery outcomes.

Step-by-Step: Building an AI Impact Measurement System

Building an effective measurement system requires planning, consistent execution, and iterative refinement. Follow these steps to create a framework that proves AI value and guides ongoing improvement.

Step 1: Define Your Measurement Goals

Start by clarifying what success looks like for your AI investment. Do you want faster feature delivery? Fewer production incidents? Reduced developer toil? Your goals determine which metrics matter most.

Write specific, measurable objectives. “Improve developer productivity” is vague. “Reduce average PR cycle time by 20% while maintaining current defect rates” is actionable. Clear goals make it easier to evaluate whether you’re succeeding.
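Goals written this way can be checked mechanically. The sketch below encodes the example objective: PR cycle time must drop by at least 20% from baseline, and the defect rate must not rise above its baseline. The inputs are illustrative.

```python
def cycle_time_goal_met(baseline_hours: float, current_hours: float,
                        baseline_defect_rate: float, current_defect_rate: float,
                        target_reduction: float = 0.20) -> bool:
    """Check 'reduce average PR cycle time by 20% while maintaining current defect rates'."""
    if baseline_hours <= 0:
        raise ValueError("baseline_hours must be positive")
    reduction = (baseline_hours - current_hours) / baseline_hours
    return reduction >= target_reduction and current_defect_rate <= baseline_defect_rate


print(cycle_time_goal_met(50, 38, 0.05, 0.05))  # True: 24% faster, defects flat
print(cycle_time_goal_met(50, 38, 0.05, 0.06))  # False: faster, but defects rose
```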

Step 2: Establish Your Baseline Metrics

Capture at least 30 days of pre-AI baseline data across all metrics relevant to your goals. Document your DORA metrics, developer productivity signals, code quality indicators, and team satisfaction scores.

Record contextual information: team size, composition, major projects underway, unusual circumstances. This context helps you interpret changes accurately and avoid false conclusions.

Step 3: Implement Tracking Infrastructure

Set up automated tracking before rolling out AI tools. Manual tracking introduces measurement inconsistency and adds overhead that discourages ongoing monitoring.

Connect your Git repositories, CI/CD pipelines, and issue trackers to your measurement platform. GitKraken Insights handles this integration automatically, pulling data from your existing tools without requiring custom instrumentation.

Step 4: Roll Out AI Tools with Measurement in Mind

When introducing AI tools, create clear before-and-after boundaries. Document exactly when AI tools became available to which team members. This timeline lets you compare periods accurately.

Consider phased rollouts that create natural comparison groups. If half your team uses AI while half doesn’t initially, you can compare outcomes between groups rather than relying solely on before-and-after comparisons.
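With a rollout log recording who gained access and when, each merged PR can be tagged with the period it belongs to. Developers who have not received access yet form the comparison group. The log format and names below are hypothetical.

```python
from datetime import date
from typing import Optional

# Hypothetical rollout log: who received AI tool access, and on which date.
ROLLOUT = {"ana": date(2025, 4, 1), "raj": date(2025, 4, 1)}


def label_period(author: str, merged_on: date, rollout: dict[str, date]) -> str:
    """Tag a merged PR as pre_ai, post_ai, or no_access (comparison group)."""
    start: Optional[date] = rollout.get(author)
    if start is None:
        return "no_access"
    return "post_ai" if merged_on >= start else "pre_ai"


print(label_period("ana", date(2025, 3, 31), ROLLOUT))  # pre_ai
print(label_period("ana", date(2025, 4, 1), ROLLOUT))   # post_ai
print(label_period("mei", date(2025, 4, 9), ROLLOUT))   # no_access
```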

Step 5: Monitor and Iterate

Review your metrics weekly during initial AI adoption, then shift to monthly reviews once patterns stabilize. Look for both expected improvements and unexpected side effects.

Adjust your measurement approach based on what you learn. If certain metrics prove uninformative, replace them. If new questions emerge, add metrics to answer them. Your measurement system should evolve with your understanding.

Common Measurement Mistakes and How to Avoid Them

Many teams make predictable errors when measuring AI impact. Learning from these mistakes helps you build a more accurate and useful measurement system from the start.

Mistake: Focusing on Activity Instead of Outcomes

Lines of code, number of commits, and story points completed are activity metrics. They show that work happened but not whether that work created value. AI can inflate all these numbers without improving actual delivery.

Focus on outcome metrics instead: features shipped to customers, incidents prevented, customer issues resolved, time to production. These measures capture whether AI helps you deliver more value, not just produce more code.

Mistake: Comparing Individuals or Teams Unfairly

Never use AI impact metrics to rank individual developers or teams against each other. Different people and teams work in different contexts: varying project complexity, code maturity, and skill sets. Rankings encourage gaming and destroy collaboration.

Compare each team against its own historical baseline. Track whether teams improve over time, not whether they outperform other teams with different circumstances.

Mistake: Ignoring Qualitative Signals

Quantitative metrics tell part of the story. Developer feedback, code review comments, and retrospective discussions reveal context that numbers miss. A team might show good metrics while accumulating hidden problems that surface later.

Combine quantitative tracking with regular qualitative check-ins. Ask developers about their experience with AI tools, what’s working, and what creates friction. These conversations often identify issues before they appear in metrics.

Mistake: Declaring Success Too Early

Initial AI adoption often shows impressive short-term gains. Developers move faster on familiar tasks, and output metrics jump. But these gains sometimes reverse as technical debt accumulates, review queues grow, or maintenance burdens increase.

Track metrics over at least three to six months before drawing firm conclusions. Short-term improvements that don’t sustain indicate problems with how AI is being used, not evidence of lasting value.
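A simple guard against declaring success too early is to require that every one of the most recent months beats the baseline, not just the latest one. The sketch below applies that rule to a single metric. The three-month default follows the guidance above, and the lead-time numbers are illustrative.

```python
def sustained_improvement(monthly: list[float], baseline: float,
                          min_months: int = 3, lower_is_better: bool = True) -> bool:
    """True only if each of the last `min_months` months beat the baseline."""
    if min_months < 1:
        raise ValueError("min_months must be at least 1")
    if len(monthly) < min_months:
        return False
    recent = monthly[-min_months:]
    if lower_is_better:
        return all(value < baseline for value in recent)
    return all(value > baseline for value in recent)


# Median lead time in hours against a 32-hour pre-AI baseline.
print(sustained_improvement([30, 28, 27], baseline=32))  # True
print(sustained_improvement([25, 29, 34], baseline=32))  # False: the gain reversed
print(sustained_improvement([30, 28], baseline=32))      # False: too early to tell
```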

In Conclusion: How to Prove AI Impact with Engineering Efficiency Metrics

Proving AI impact requires more than tracking adoption numbers or citing vendor benchmarks. You need a measurement system that connects AI tools to real delivery outcomes – one that shows whether your team ships better software faster, not just produces more code.

Start with DORA metrics as your foundation, then layer in developer productivity signals and code quality indicators. Establish clear baselines before AI rollout so you can make meaningful before-and-after comparisons. Track trends over time rather than reacting to single-sprint snapshots.

Remember that AI amplifies your existing strengths and weaknesses. If your metrics don’t improve – or get worse – after AI adoption, look at the organizational capabilities that enable AI success: testing practices, review processes, feedback loops, and developer support systems.

GitKraken Insights gives you the visibility to track all these metrics in one place, connecting AI adoption data to DORA metrics and code quality indicators. With the right measurement system, you can prove AI’s real value to leadership and make data-driven decisions about where to invest next.

FAQs About Proving AI Impact: DORA and Velocity Metrics Guide

What are the four DORA metrics and how do they relate to AI impact?

The four DORA metrics are deployment frequency, lead time for changes, change failure rate, and mean time to recovery. They measure how effectively your team delivers software to production.

AI affects these metrics differently. It can shorten lead time by speeding up coding, provided review and deployment aren't the bottleneck, but it can raise change failure rate if quality controls don't keep pace with faster code production.

How do you measure developer productivity in AI-assisted teams?

Measure developer productivity through cycle time, PR pickup time, review turnaround, and code quality indicators. These signals reveal how work flows through your system and where bottlenecks occur.

GitKraken Insights tracks these metrics automatically, connecting pull request analytics to delivery outcomes so you can see how AI affects your team’s workflow patterns.

What baselines should you establish before AI tool adoption?

Capture at least 30 days of baseline data for DORA metrics, developer productivity signals, and code quality indicators. Document team composition, ongoing projects, and any unusual circumstances.

These baselines give you the reference point for measuring AI's impact. Without them, you can't attribute changes to AI rather than to other factors that shifted at the same time.

Why do DORA metrics sometimes get worse after AI adoption?

DORA metrics decline when AI accelerates code production faster than quality controls can handle. More code means more potential bugs. The 2025 DORA Report found AI adoption correlates negatively with delivery stability.

This pattern indicates you need stronger testing, review processes, and quality gates – not that AI should be abandoned. AI amplifies existing capabilities, so strengthen those capabilities alongside AI tools.

How does GitKraken Insights help track AI’s impact on engineering teams?

GitKraken Insights tracks DORA metrics alongside AI-specific measurements like code quality changes, review time improvements, and developer satisfaction. It connects before-and-after data so you can prove AI’s real effect.

The platform shows trend context for decision-making – when metrics change, you understand whether it’s temporary noise or an emerging pattern that needs action.

What is shipping velocity and how does it differ from sprint velocity?

Shipping velocity measures value delivered to customers over time – features, fixes, and improvements reaching production. Sprint velocity counts story points completed regardless of whether that work ships.

AI can inflate sprint velocity by speeding up code production without increasing shipped value. Shipping velocity reveals whether AI genuinely accelerates delivery or just accelerates activity.
