Development velocity tells you how much work your engineering team completes during a sprint – but measuring it incorrectly can hurt more than it helps. When velocity becomes a performance target instead of a planning tool, you’ll see inflated estimates, gaming behaviors, and a number that means nothing. This guide shows you how to calculate velocity accurately, interpret the data responsibly, and turn measurement into genuine improvements.

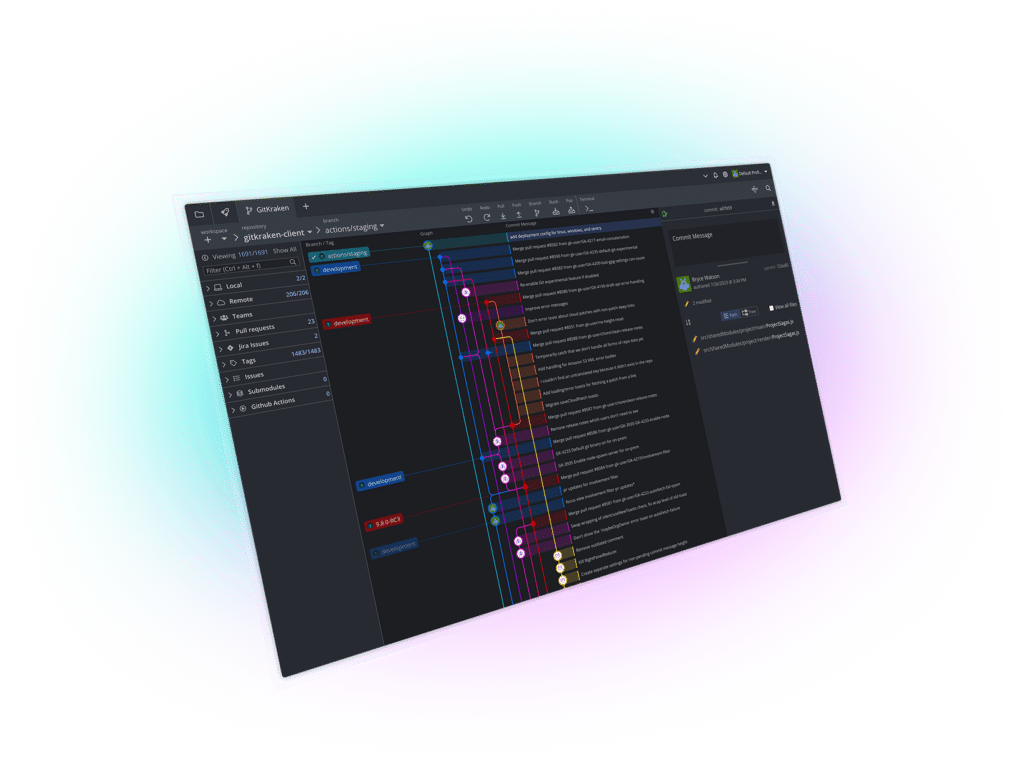

GitKraken Insights gives you engineering intelligence tools that connect velocity tracking to the broader picture: DORA metrics, cycle time analysis, and pull request insights. By the end of this guide, you’ll know how to establish a reliable velocity baseline, forecast with confidence, and avoid the pitfalls that derail so many measurement efforts.

Key Takeaways: Measure Development Velocity Without Gaming Metrics

- Velocity measures predictability rather than productivity – a consistent 25 story points per sprint beats erratic swings between 40 and 15.

- Story points capture relative complexity including effort, uncertainty, and technical risk, not hours worked or individual output.

- Track velocity across three to five sprints before treating it as a reliable baseline for forecasting and capacity planning.

- GitKraken Insights automatically tracks DORA metrics alongside velocity data, giving you context that isolated numbers can’t deliver.

- Never compare velocity between different teams or use it to evaluate individual developers – doing so destroys the metric’s planning value.

What Is Development Velocity in Agile?

Development velocity is the amount of work a software team completes during a sprint or iteration. You measure it by adding up the story points (or other units) assigned to fully completed user stories at the end of each sprint.

Velocity is a throughput metric, not a productivity score. It answers one question: how much work does this team typically finish? That baseline helps you forecast timelines, plan sprint capacity, and set realistic expectations with stakeholders.

A team with an average velocity of 30 story points can reliably commit to about 30 points each sprint. Knowing this prevents the overcommitment cycle that burns out developers and erodes trust with product managers.

Why Velocity Measures Predictability, Not Speed

High velocity doesn’t mean high performance. A team rushing through 50 points of buggy code delivers less value than a team completing 25 points of tested, production-ready features.

The real power of velocity lies in consistency. When your team delivers roughly the same number of points each sprint, you gain the ability to forecast accurately. Stakeholders stop asking “will it be ready?” and start asking “when will it be ready?” – a question velocity can answer.

Story Points vs Hours: Which Measurement to Use

Story points express relative complexity, not time. A five-point story represents roughly 1.7 times the effort of a three-point story, where “effort” folds in technical challenges, uncertainty, and testing needs.

Hours-based estimates fail because they ignore variability. The same feature might take a senior developer four hours and a junior developer twelve hours – but the complexity stays constant. Story points abstract away individual differences and focus on the work itself.

Most agile teams prefer story points because they encourage team discussion about complexity rather than debates about who works faster. The estimation conversation itself often surfaces hidden risks and dependencies.

How to Calculate Development Velocity Step by Step

Calculating velocity requires consistent definitions and disciplined tracking. Follow these steps to establish an accurate baseline that you can trust for planning.

Step 1: Define What “Done” Means for Your Team

Only fully completed work counts toward velocity. Create a definition of done that includes all exit criteria: code reviewed, tests passing, documentation updated, and ready to deploy.

A story that’s 90% complete contributes zero points to this sprint’s velocity. This rule prevents partial credit that distorts your numbers over time.

Step 2: Sum Completed Story Points at Sprint End

At the end of each sprint, add up the story points from every user story that meets your definition of done. If you completed stories worth 8, 5, 3, and 2 points, your sprint velocity is 18.

Record which stories contributed to each sprint. This history helps you spot patterns – certain types of work might consistently take longer than estimated.
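Steps 1 and 2 fit in a few lines of Python. This is a minimal sketch. It assumes a hypothetical `Story` record whose four boolean fields stand in for the example exit criteria from Step 1, so substitute your team's own definition of done. The story keys are made up. The function also returns the contributing keys, which gives you the per-sprint history described above.

```python
from dataclasses import dataclass


@dataclass
class Story:
    key: str
    points: int
    # Hypothetical exit criteria mirroring the example definition of done.
    reviewed: bool = False
    tests_pass: bool = False
    docs_updated: bool = False
    deployable: bool = False

    @property
    def done(self) -> bool:
        # A story counts only when every criterion is met; there is no partial credit.
        return all((self.reviewed, self.tests_pass, self.docs_updated, self.deployable))


def sprint_velocity(stories: list[Story]) -> tuple[int, list[str]]:
    """Return the sprint's velocity and the keys of the stories that contributed to it."""
    finished = [s for s in stories if s.done]
    return sum(s.points for s in finished), [s.key for s in finished]


DONE = dict(reviewed=True, tests_pass=True, docs_updated=True, deployable=True)
sprint = [
    Story("APP-101", 8, **DONE),
    Story("APP-102", 5, **DONE),
    Story("APP-103", 3, **DONE),
    Story("APP-104", 2, **DONE),
    Story("APP-105", 5, reviewed=True, tests_pass=True),  # nearly done, still counts as zero
]
velocity, contributors = sprint_velocity(sprint)
print(velocity, contributors)  # 18 ['APP-101', 'APP-102', 'APP-103', 'APP-104']
```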

Step 3: Calculate Rolling Average Velocity

Single-sprint velocity fluctuates too much to use for planning. Calculate a rolling average across your last three to five sprints to smooth out variations from holidays, sick days, or sprint-specific challenges.

The formula is simple: Total Story Points Completed ÷ Number of Sprints = Average Velocity. If your last four sprints delivered 18, 22, 16, and 20 points, your average velocity is 19 points per sprint.
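A rolling average is easy to keep in code. The sketch below assumes sprint history is stored oldest first and uses a four-sprint window. The extra opening sprint of 25 points is hypothetical and simply falls outside the window.

```python
def rolling_average(history: list[int], window: int = 4) -> float:
    """Average velocity over the most recent `window` sprints (history is oldest first)."""
    if not history or window < 1:
        raise ValueError("need at least one sprint and a positive window")
    recent = history[-window:]
    return sum(recent) / len(recent)


history = [25, 18, 22, 16, 20]
print(rolling_average(history))  # 19.0, from the last four sprints: 76 / 4
```

With fewer sprints than the window, the function averages whatever history exists. That is one reason to wait three to five sprints before trusting the number.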

Step 4: Use Velocity for Sprint Capacity Planning

With your average velocity established, sprint planning becomes data-driven. Pull stories from the backlog until their total points approach your average velocity – but don’t exceed it.

Adjust for known capacity changes. If a team member is on vacation, reduce your commitment proportionally. If you’re onboarding someone new, expect lower velocity until they ramp up.
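Step 4 can be expressed as a small planning helper. This sketch makes three assumptions. Capacity scales linearly with available person-days, which is a common simplification rather than a rule from this guide. The backlog is already in priority order. The story names are placeholders.

```python
def plan_sprint(
    backlog: list[tuple[str, int]],
    avg_velocity: float,
    available_person_days: float,
    normal_person_days: float,
) -> tuple[list[str], int, float]:
    """Pull stories in priority order until the next one would exceed adjusted capacity."""
    # Assumption: a sprint with 80% of the usual person-days gets 80% of the usual velocity.
    capacity = avg_velocity * available_person_days / normal_person_days
    committed: list[str] = []
    total = 0
    for key, points in backlog:
        if total + points > capacity:
            break  # stop here rather than skipping ahead, so priority order is respected
        committed.append(key)
        total += points
    return committed, total, capacity


backlog = [("A", 5), ("B", 3), ("C", 5), ("D", 2), ("E", 3)]
# Five people over a ten-day sprint, with one person on vacation for the whole sprint.
committed, total, capacity = plan_sprint(backlog, 19, 40, 50)
print(committed, total, round(capacity, 1))  # ['A', 'B', 'C', 'D'] 15 15.2
```

Stopping at the first story that doesn't fit keeps priority order intact. Some teams instead skip to a smaller story further down the backlog, which fills capacity more fully at the cost of reordering.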

How Story Points Estimation Works in Practice

Story point estimation works best when the entire development team participates. The conversation about complexity matters as much as the number you assign.

Choose a Reference Story as Your Baseline

Pick a completed story that everyone understands as your reference point – typically a small, well-defined piece of work. Assign it a middle-of-the-scale value like 3 or 5 points.

Every future estimate compares against this reference. Is the new story twice as complex? About the same? Significantly simpler? Relative comparison reduces the cognitive load of estimation.

Use the Fibonacci Scale for Story Point Values

The Fibonacci sequence (1, 2, 3, 5, 8, 13, 21) works well for story points because the gaps grow larger at higher values. This mirrors how uncertainty increases with complexity – you can’t precisely distinguish between a 14-point story and a 15-point story.

When estimates diverge widely during planning poker, that signals hidden complexity or unclear requirements. Use this as an opportunity to discuss before committing.
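One way to make "diverge widely" concrete is to measure how far apart the votes sit on the scale. The one-step threshold below is an assumption chosen for illustration, and teams typically set their own.

```python
FIBONACCI_SCALE = [1, 2, 3, 5, 8, 13, 21]


def votes_diverge(votes: list[int], max_steps: int = 1) -> bool:
    """True when the highest and lowest votes are more than `max_steps` apart on the scale."""
    # list.index raises ValueError for a vote that is not on the scale.
    positions = [FIBONACCI_SCALE.index(v) for v in votes]
    return max(positions) - min(positions) > max_steps


print(votes_diverge([3, 5, 5, 3]))   # False: adjacent values, a quick conversation
print(votes_diverge([2, 5, 13, 5]))  # True: discuss before committing
```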

Split Stories That Exceed Your Threshold

Any story above 13 points likely contains multiple pieces of work. Large stories introduce estimation risk and make sprint progress harder to track.

Break big stories into smaller, independently valuable chunks. Each chunk should deliver something testable, even if it’s not user-facing. Smaller stories improve flow and give you better velocity data.
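A check for oversized stories is easy to automate. Here is a Python sketch using the 13-point threshold from this section. The story names and estimates are made up.

```python
SPLIT_THRESHOLD = 13  # stories above this likely bundle several pieces of work


def stories_to_split(backlog: dict[str, int], threshold: int = SPLIT_THRESHOLD) -> list[str]:
    """Return keys of stories estimated above the threshold, largest first."""
    oversized = [key for key, points in backlog.items() if points > threshold]
    return sorted(oversized, key=lambda key: backlog[key], reverse=True)


backlog = {"checkout-redesign": 21, "login-fix": 3, "search-filters": 13, "profile-page": 8}
print(stories_to_split(backlog))  # ['checkout-redesign']
```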

How Burndown and Burnup Charts Track Sprint Progress

Velocity tells you what happened after the sprint. Burndown and burnup charts show you what’s happening during the sprint, so you can course-correct before it’s too late.

What a Burndown Chart Shows

A burndown chart plots remaining work against time. The vertical axis shows story points still to complete; the horizontal axis shows sprint days. A guideline (often called the ideal line) runs straight from your committed total at sprint start to zero at sprint end.

When your actual line stays close to the guideline, you’re on track. If the line flattens or rises, work is taking longer than expected or scope has increased.
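The guideline is a straight line, so comparing actual progress against it takes only a few lines of code. This sketch assumes you record the remaining points once per sprint day, with day 0 as the sprint start. The numbers are illustrative.

```python
def burndown_status(committed: int, remaining_by_day: list[int], sprint_days: int) -> list[str]:
    """Compare remaining points with the straight-line guideline for each recorded day."""
    lines = []
    for day, remaining in enumerate(remaining_by_day):
        # Guideline falls linearly from the committed total to zero at the end of the sprint.
        guideline = committed * (sprint_days - day) / sprint_days
        status = "behind" if remaining > guideline else "on track"
        lines.append(f"day {day}: {remaining} left, guideline {guideline:g} ({status})")
    return lines


for line in burndown_status(20, [20, 18, 17, 17, 17, 14], sprint_days=10):
    print(line)
```

The flat stretch from day 2 to day 4 is the pattern described above. The actual line stops falling while the guideline keeps dropping, so the gap widens each day.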

What a Burnup Chart Shows

A burnup chart plots completed work against total scope. Two lines appear: one tracking completed points (rising over time) and one tracking total scope (ideally flat, but sometimes rising).

Burnup charts expose scope creep clearly. If the scope line keeps climbing, you can see exactly when new work was added and have the data to push back on mid-sprint changes.
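Because a burnup chart tracks scope as its own line, finding when work was added only requires comparing each day with the previous one. The sketch assumes one scope snapshot per sprint day. The values are illustrative.

```python
def scope_changes(scope_by_day: list[int]) -> list[tuple[int, int]]:
    """Return (day, points added) for every day the total-scope line went up."""
    return [
        (day, scope_by_day[day] - scope_by_day[day - 1])
        for day in range(1, len(scope_by_day))
        if scope_by_day[day] > scope_by_day[day - 1]
    ]


completed = [0, 3, 5, 8, 8, 13]
scope = [20, 20, 23, 23, 28, 28]
print(scope_changes(scope))  # [(2, 3), (4, 5)]
print(f"{completed[-1]} of {scope[-1]} points done; scope grew by {scope[-1] - scope[0]}")
```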

When to Use Each Chart Type

Burndown charts work well for sprints with stable scope. They answer the question: are we going to finish what we committed to?

Burnup charts shine when scope changes during the sprint – they separate the “how much did we complete?” question from the “how much did the goal move?” question. Many teams find burnup charts more informative for stakeholder communication.

How to Avoid Metric Gaming and Dysfunctional Behaviors

Velocity measurement fails when it creates incentives that undermine the behaviors you want. Understanding these failure modes helps you prevent them.

Never Track Individual Developer Velocity

The moment you measure individual story points, collaboration dies. Developers stop helping teammates debug because that time doesn’t show up in “their” numbers. Senior developers stop mentoring and reviewing code because that work doesn’t earn points.

Velocity is a team metric. Celebrate when the whole team delivers, not when one person closes the most stories. The developer who unblocks three teammates often contributes more than the one who finishes a complex feature alone.

Never Compare Velocity Across Different Teams

Each team calibrates story points differently. A five-point story for one team might be an eight-point story for another, depending on their codebase, domain expertise, and estimation habits.

Comparing Team A’s 40 points to Team B’s 25 points tells you nothing meaningful. Focus on whether each team’s velocity is consistent and whether their forecasts prove accurate.

Never Use Velocity as a Performance Target

When velocity becomes a target, teams game it. They inflate estimates so hitting 30 points feels easier. They avoid hard problems because a risky five-point story earns no more credit than five easy one-point stories.

This is Goodhart’s Law in action: when a measure becomes a target, it stops being a useful measure. Use velocity for planning and forecasting, not for performance reviews or bonus calculations.

Watch for Story Point Inflation Over Time

If your team’s velocity keeps climbing without any real process improvements, you might have estimation drift. What used to be a three-point story gradually becomes a five-point story.

Periodically re-anchor your estimates against completed work. Compare new stories to completed ones of similar complexity to keep your scale calibrated.

How to Use DORA Metrics Alongside Velocity

Velocity alone doesn’t tell the full story. DORA metrics were developed by the DevOps Research and Assessment (DORA) program, which is now part of Google Cloud. They capture software delivery performance across four dimensions that complement velocity data.

The Four DORA Metrics Explained

Deployment frequency measures how often you release to production. Lead time for changes measures the time from a change being committed to that change running in production. Change failure rate tracks what percentage of deployments cause a failure in production, such as an incident or rollback. Mean time to recovery (also called time to restore service) measures how quickly you restore service after a failure.

Elite performers in DORA’s research deploy on demand (often multiple times per day), with short lead times (less than one day), low change failure rates (0-15%), and fast recovery times (under one hour). These benchmarks come from DORA’s annual State of DevOps surveys of thousands of professionals, and the exact thresholds shift from one report year to the next.
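To make the four definitions concrete, here is a sketch that computes them from a list of deployment records. The `Deployment` fields and the `at` helper are hypothetical and do not reflect GitKraken Insights' data model or API. Lead time is measured per change, from commit to deploy. Recovery time is simplified to run from the failing deployment to restoration, whereas real tooling usually measures from when the failure was detected.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    deployed_at: datetime
    commit_times: list[datetime]  # commit time of each change shipped in this deployment
    failed: bool = False  # caused a production failure (incident, rollback, hotfix)
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_summary(deploys: list[Deployment], period_days: int) -> dict[str, object]:
    lead_times = [d.deployed_at - c for d in deploys for c in d.commit_times]
    failures = [d for d in deploys if d.failed]
    # Simplification: recovery is timed from the failing deployment, not from detection.
    restores = [d.restored_at - d.deployed_at for d in failures if d.restored_at]
    return {
        "deploys_per_day": len(deploys) / period_days,
        "median_lead_time": median(lead_times) if lead_times else None,
        "change_failure_rate": len(failures) / len(deploys) if deploys else 0.0,
        "mean_time_to_restore": sum(restores, timedelta()) / len(restores) if restores else None,
    }


def at(hours: float) -> datetime:
    """Hypothetical helper: a timestamp `hours` after an arbitrary start of the week."""
    return datetime(2024, 3, 4, 9, 0) + timedelta(hours=hours)


week = [
    Deployment(at(6), [at(0), at(2)]),  # lead times 6h and 4h
    Deployment(at(29), [at(24)]),  # 5h
    Deployment(at(51), [at(44)], failed=True, restored_at=at(51) + timedelta(minutes=40)),  # 7h
    Deployment(at(96), [at(94)]),  # 2h
]
summary = dora_summary(week, period_days=7)
for name, value in summary.items():
    print(f"{name}: {value}")
```

For this sample week the median lead time is five hours, one of four deployments failed (a 25% change failure rate), and service came back 40 minutes after the failing deployment.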

Why Velocity and DORA Metrics Work Together

A team might have steady velocity but terrible deployment frequency – they’re finishing stories but code isn’t reaching production. Another team might deploy constantly but have high change failure rates – speed without stability.

GitKraken Insights tracks all four DORA metrics alongside pull request analytics and code quality indicators. This combination reveals whether your velocity translates into actual value delivery or just busy work.

How to Interpret Velocity Drops in Context

When velocity declines, DORA metrics help diagnose the cause. If lead time spiked, work might be stuck in code review. If deployment frequency dropped, something might be blocking releases.

Context prevents misdiagnosis. A velocity dip during a major refactoring effort might coincide with improving code quality metrics – that’s healthy, not a problem to fix.

How to Build a Velocity Measurement System That Improves Your Team

Measurement should drive improvement, not just reporting. Here’s how to build a velocity practice that makes your team better over time.

Use Retrospectives to Connect Metrics to Actions

Review velocity data during sprint retrospectives. Did you hit your forecast? If not, what blocked you? Were estimates accurate, or did certain stories surprise you?

Turn observations into experiments. If code reviews consistently slow you down, try pairing reviewers earlier. If certain story types always blow estimates, invest time in better upfront requirements.

Track Velocity Variance, Not Just Velocity

A team averaging 25 points with low variance (24, 26, 25, 25) is healthier than one averaging 25 with high variance (15, 35, 20, 30). Consistent teams can make reliable commitments; erratic teams can’t.
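You can track variance alongside the average with a few lines of code. This sketch reports the population standard deviation and the coefficient of variation (standard deviation divided by the mean). The coefficient of variation lets you compare one team's consistency across periods with different averages. Using the population rather than the sample standard deviation is a judgment call for describing a fixed set of sprints. The two histories are illustrative.

```python
from statistics import mean, pstdev


def consistency(history: list[int]) -> tuple[float, float, float]:
    """Return (mean, population standard deviation, coefficient of variation)."""
    avg = mean(history)
    spread = pstdev(history)
    return avg, spread, spread / avg


for name, history in {"steady": [24, 26, 25, 25], "erratic": [15, 35, 20, 30]}.items():
    avg, spread, cv = consistency(history)
    print(f"{name}: mean {avg:.1f}, std dev {spread:.1f}, CV {cv:.0%}")
```

Both histories average 25, but the standard deviation is about 0.7 points for the steady team and about 7.9 for the erratic one.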

If your variance is high, investigate what causes the swings. External dependencies? Unclear requirements? Too much unplanned work? Each cause has different solutions.

Connect Velocity to Business Outcomes

Story points don’t measure value delivered; they measure estimated effort. A 50-point sprint that ships features nobody uses accomplishes less than a 20-point sprint that solves real customer problems.

GitKraken Insights helps you connect engineering metrics to business impact by tracking how development work flows from commit to deployment. This visibility lets engineering leaders communicate progress in terms leadership understands.

How to Forecast Project Completion Using Velocity Data

One of velocity’s most practical uses is release forecasting. With reliable velocity data, you can answer “when will this be done?” with confidence instead of guesswork.

The Basic Forecasting Formula

Divide remaining story points by average velocity to estimate sprints remaining. If you have 100 points left in the backlog and your team averages 25 points per sprint, expect about four more sprints.

Add buffer time for uncertainty. Real projects encounter surprises – dependencies, technical debt, changing requirements. A forecast of “four to five sprints” is more honest than “exactly four sprints.”
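The formula and the buffer fit in one function. Rounding up reflects that a partially used sprint still occupies a whole sprint. The 20% default buffer is an assumption for illustration, not a figure from this guide.

```python
import math


def forecast_sprints(remaining_points: float, avg_velocity: float, buffer: float = 0.2) -> tuple[int, int]:
    """Return (sprints at average velocity, sprints with a proportional buffer), rounded up."""
    if avg_velocity <= 0:
        raise ValueError("average velocity must be positive")
    base = remaining_points / avg_velocity
    return math.ceil(base), math.ceil(base * (1 + buffer))


print(forecast_sprints(100, 25))  # (4, 5): "four to five sprints"
```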

How to Handle Uncertainty in Forecasts

Use velocity ranges instead of single numbers. If your velocity varies between 20 and 30 points, forecast completion between 3.3 and 5 sprints for that 100-point backlog. Because a partial sprint still takes a whole sprint, that means planning for four to five sprints.
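The range calculation divides the remaining work by each end of the velocity range. Here the range is passed in directly. Taking the minimum and maximum of your recent sprints is one reasonable way to choose it, though that choice is an assumption.

```python
import math


def forecast_range(remaining_points: float, low_velocity: float, high_velocity: float) -> tuple[int, int]:
    """Return (best case, worst case) sprint counts, rounded up to whole sprints."""
    if not 0 < low_velocity <= high_velocity:
        raise ValueError("expected 0 < low_velocity <= high_velocity")
    best = remaining_points / high_velocity  # every sprint lands at the high end
    worst = remaining_points / low_velocity  # every sprint lands at the low end
    return math.ceil(best), math.ceil(worst)


print(forecast_range(100, 20, 30))  # (4, 5): 3.3 and 5.0 sprints, rounded up
```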

The further out you forecast, the wider your range should be. Requirements will change, team composition might shift, and unknown unknowns will appear. Communicate ranges and update forecasts as you learn more.

How to Account for Team Changes in Forecasts

New team members need ramp-up time. Expect 50-70% of normal velocity during their first few sprints as they learn the codebase, processes, and domain.

Departures affect velocity even after someone leaves. Their domain knowledge, code ownership, and collaboration patterns take time to redistribute. Don’t assume velocity will immediately bounce back.
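Instead of dividing by a single average, you can step through the forecast sprint by sprint, using lower expected velocity while the team absorbs a change. The reduced figures below (20 and 22 points against a steady 25) are hypothetical, so substitute your own expectations.

```python
def sprints_to_finish(remaining_points: float, velocity_schedule: list[float], steady_velocity: float) -> int:
    """Count sprints until cumulative expected velocity covers the remaining points.

    velocity_schedule holds the expected velocity for each upcoming sprint during a
    transition (a new hire ramping up, a departure being absorbed); after it runs
    out, steady_velocity is assumed for every later sprint.
    """
    if steady_velocity <= 0:
        raise ValueError("steady_velocity must be positive")
    completed, sprints = 0.0, 0
    while completed < remaining_points:
        if sprints < len(velocity_schedule):
            completed += velocity_schedule[sprints]
        else:
            completed += steady_velocity
        sprints += 1
    return sprints


print(sprints_to_finish(100, [], 25))        # 4: the naive forecast
print(sprints_to_finish(100, [20, 22], 25))  # 5: two slower sprints push delivery out
```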

In Conclusion: Building Sustainable Velocity Practices for Your Team

Measuring development velocity accurately requires discipline: consistent definitions, honest estimation, and a commitment to using the data for planning rather than punishment. When you get it right, velocity becomes one of your most valuable tools for predictable delivery.

Start with the basics: define “done” clearly, calculate rolling averages across multiple sprints, and never use velocity to compare teams or individuals. Add context through burndown charts and DORA metrics so you understand not just how much work you finish, but how that work flows to production.

GitKraken makes velocity tracking part of a larger engineering intelligence picture – connecting story points to cycle times, deployment frequency, and code quality indicators. This integrated view helps you move from reactive firefighting to proactive improvement, building a measurement system that makes your team faster and more reliable over time.

FAQs About Measuring Development Velocity Without Gaming Metrics

What is a good velocity for an agile team?

A good velocity is one that remains consistent over time, not a specific number. Your team’s ideal velocity depends on size, experience, and project complexity.

Focus on stability rather than hitting a particular target. A team consistently delivering 20 points is healthier than one swinging between 15 and 40.

How often should you recalculate development velocity?

Recalculate velocity at the end of every sprint to keep your rolling average current. Review trends monthly to spot patterns and address emerging issues.

GitKraken Insights updates velocity calculations automatically, eliminating manual tracking overhead while keeping your data fresh for planning.

Can velocity predict exact project completion dates?

Velocity can estimate completion ranges, not exact dates. Divide remaining work by average velocity for a rough timeline, then add buffer for unknowns.

The further out you forecast, the wider your range should be. Update predictions as you complete sprints and learn more about the remaining work.

Why shouldn’t you compare velocity between different teams?

Each team calibrates story points differently based on their codebase, tools, and estimation habits. Team A’s 40 points and Team B’s 25 points represent incomparable units.

Comparisons create pressure to game the numbers rather than improve actual delivery. Focus on each team’s consistency and forecast accuracy instead.

How does GitKraken Insights help with velocity tracking?

GitKraken Insights automatically tracks DORA metrics, pull request analytics, and delivery performance in a single dashboard. This context reveals whether velocity translates to real value.

The platform connects to your Git repositories and issue trackers, calculating metrics without manual data entry. You get engineering intelligence without building custom dashboards.

What causes velocity to drop suddenly?

Common causes include team member departures, new hires ramping up, unclear requirements, excessive meetings, or unplanned work like production incidents.

DORA metrics help diagnose the specific cause – if lead time spiked, work might be stuck in review. If deployment frequency dropped, releases might be blocked.
